This post is part of an essay contest for effectiveideas.org. The prize will “be for the best review of Will MacAskill’s What We Owe the Future. The book is MacAskill’s case for longtermism, the view that positively influencing the longterm future is a key moral priority of our time.”

Last month, Scottish philosopher and ethicist William MacAskill published his book What We Owe the Future. The book has garnered quite a bit of attention from major media outlets like The New York Times, The New Yorker, and the BBC. It provides a layperson’s introduction to the philosophical theory of longtermism: the idea that positively influencing the long-term future is a key moral priority.

The basic idea rests on a few premises which do not seem particularly absurd when stated plainly: future people matter; there could be a lot of future people; we can influence the future; it is morally good to help people. The conclusion is that we ought to help future people live better lives. How seriously you take this conclusion depends on how strongly you believe in your premises. In a 2019 article entitled ‘Longtermism’, MacAskill distinguishes between different strengths of longtermism:

(i) longtermism, which designates an ethical view that is particularly concerned with ensuring long-run outcomes go well;

(ii) strong longtermism, which, like my original proposed definition, is the view that long-run outcomes are the thing we should be most concerned about;

(iii) very strong longtermism, the view on which long-run outcomes are of overwhelming importance.

While he previously thought longtermism should refer to (ii), he now believes it should refer to (i), in part because it is more intuitively attractive to the public. The idea that the future is important makes sense to most people. The idea that the future matters more than anything else is a harder pill to swallow for most people outside the effective altruist movement. What We Owe the Future has likely seen more success on account of its broad appeal to the intuitions associated with (i). Arguments for the more radical conception in (iii) would likely be off-putting to the public, who have yet to embrace basic long-term thinking.

Some of the public may reject the idea that future people matter at all, especially considering there are pressing issues regarding existing people. Some believe that if someone does not exist, we do not have any moral reason to consider their well-being. This view is pithily described by Jan Narveson as being “in favour of making people happy, but neutral about making happy people” (Narveson, 1973). It does not take much creativity to think of a counter-example in which potential people matter. MacAskill provides the following:

To see how intuitive this is, suppose that, while hiking, I drop a glass bottle on the trail and it shatters. And suppose that if I don’t clean it up, later a child will cut herself badly on the shards. In deciding whether to clean it up, does it matter when the child will cut herself? Should I care whether it’s a week, or a decade, or a century from now? No. Harm is harm, whenever it occurs.

It seems hard to advocate for indifference about harming a future child. However, some may reject moral consideration for future people on account of partiality (the idea that the special people in our lives merit special moral consideration) or reciprocity (the view that we ought to repay the people who have done good things for us). While these might be real moral concerns, they do not show that future people fail to matter. Caring about future people may not matter as much as caring for your family in the present, but it is still very important.

Even if your country, region, town, or family deserves particular moral consideration, the future could still be more important because the number of potential people is so large. We could say that each of one’s townspeople is a hundred times as important as a future person and still reach the conclusion that future people matter more in aggregate. This is possible because the future could last millions of years and the number of future humans could reach the hundreds of billions or trillions. Even if that is very unlikely, we may still want to try very hard to achieve a lasting civilization. It is worth putting up a fight for the preservation of humanity.
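The arithmetic behind this weighting argument can be sketched in a few lines of Python. Every number here is an assumption chosen for illustration (the town size, the count of future people, the 100x partiality weight, and the 1% chance of a long future); none of them comes from the book.

```python
# Illustrative sketch only: all figures are assumptions, not taken from the book.
TOWN_POPULATION = 10_000            # hypothetical town
FUTURE_PEOPLE = 100_000_000_000     # assumed: low end of "hundreds of billions"
PARTIALITY_WEIGHT = 100             # each townsperson counts 100x a future person
P_LONG_FUTURE = 0.01                # assumed 1% chance the long future happens


def weighted_importance(people: int, weight_per_person: float,
                        probability: float = 1.0) -> float:
    """Number of people times per-person moral weight, discounted by probability."""
    return people * weight_per_person * probability


town = weighted_importance(TOWN_POPULATION, PARTIALITY_WEIGHT)
future = weighted_importance(FUTURE_PEOPLE, 1, P_LONG_FUTURE)

print(f"town:   {town:,.0f}")
print(f"future: {future:,.0f}")
print("future outweighs town:", future > town)
```

Under these assumed numbers, the future still outweighs the town even after the hundredfold discount and the low probability. Different assumptions can reverse the result, which is why the strength of the conclusion depends on the premises.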

Considering the rapid growth of technology, it does not seem particularly far-fetched to imagine humans finding other habitable planets or creating space colonies within the next ten thousand years. If that seems inconceivable, just consider what sort of predictions about the future people in the year 1000 would have made. Some accomplishments of the present would have been inconceivable to them. It is reasonable to think that future accomplishments will be nearly inconceivably impressive should humanity continue to exist.

Unfortunately, it is possible we ruin things if we are not careful. MacAskill analogizes humanity to an imprudent teenager with a potential life span of a thousand years. It is important to make smart decisions as a teenager that put a person’s life on track. Teenagers can make certain choices that reduce the chance that they live quality lives. Perhaps even worse, a reckless teenager can lose his life if he is not careful. Even a person as brilliant as MacAskill can make critical errors while young.

Other decisions I made mattered a lot. I was reckless as a teenager and sometimes went “buildering,” also known as urban climbing. Once, coming down from the roof of a hotel in Glasgow, I put my foot on a skylight and fell through. I caught myself at waist height, but the broken glass punctured my side. Luckily, it missed all internal organs. A little deeper, though, and my guts would have popped out violently, and I could easily have died. I still have the scar: three inches long and almost half an inch thick, curved like an earthworm. Dying that evening would have prevented all the rest of my life. My choice to go buildering was therefore an enormously important (and enormously foolish) decision—one of the highest-stakes decisions I’ll ever make.

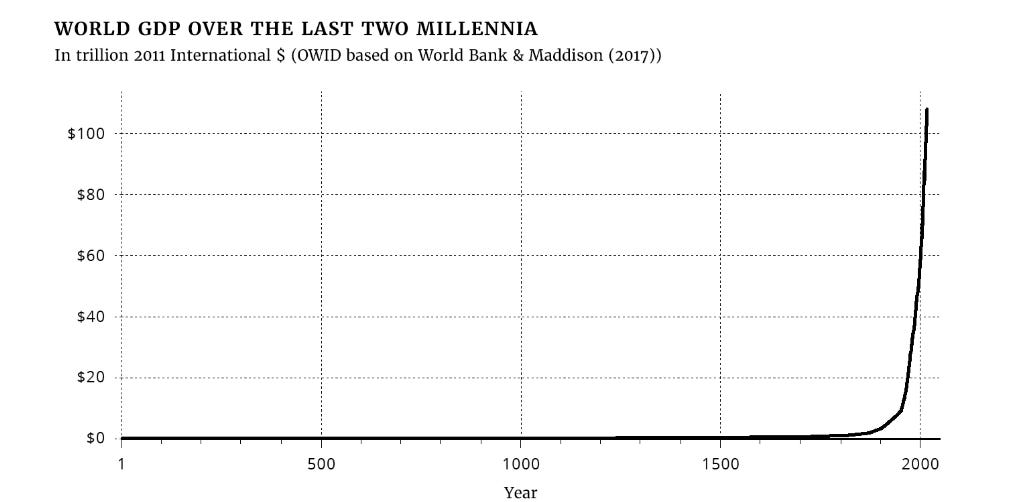

The death of a teenager is particularly sad. While many people might value their early life the most, we can imagine that a teenager who dies prematurely has been deprived of some of life’s greatest joys. We are likely analogous to a teenager with a promising future. The material conditions of humankind are improving rapidly. Industrial and technological revolutions have paved the way for unimaginable levels of comfort, safety, and convenience in developed nations.

One may be concerned that none of this matters because humans do not have control over their own destiny. Do the choices humanity makes to influence the future even matter? If we look at the historical record, we have reason to believe that humans can have a major influence. MacAskill believes that many megafauna were wiped out by human hunting and disruption of the environment. Perhaps even more troubling, Denisovans and Neanderthals were likely eliminated by competition with humans in addition to interbreeding. An alternative world ruled by other hominids could be so different it is difficult to imagine. Humans can be highly influential without trying to be. We can imagine that rather than wiping out species, humans could do a great deal of long-term good. In evaluating long-term good, MacAskill wants to assess causes on the basis of significance, persistence, and contingency.

Significance is the average value added by bringing about a certain state of affairs. How much worse is the world, at any one time, because the glyptodonts are extinct? In assessing this, we would want to attend to all relevant aspects of the glyptodonts’ extinction: the intrinsic loss of a species on the planet, the loss to humans who could have used their shells or eaten their meat, and the impact on the ecosystems the glyptodonts inhabited.

The persistence of a state of affairs is how long that state of affairs lasts, once it has been brought about. The nonexistence of the glyptodonts may be exceptionally persistent, starting 12,000 years ago and lasting until the end of the universe. It would only fail to be exceptionally persistent if, at a future time, we were to bring them back.

Technology may make this possible. There are current efforts to “deextinct” certain species, like the woolly mammoth, by extracting DNA from their remains and editing that DNA into the cells of similar modern animals, like elephants. However, even if successful, these efforts would not truly bring back the original creatures: instead, they would produce a hybrid—an animal that looks a lot like the extinct animal but is not genetically the same. Should future generations try to bring back the glyptodonts, they would probably face similar challenges.

The final aspect of the framework is contingency. This is the most subtle part of the framework. In English the word “contingency” has a few different meanings; in the sense I’m using it, an alternative term would be “noninevitability.” Contingency represents the extent to which a state of affairs depends on a small number of specific actions. If something is very contingent, then that change would not have otherwise occurred for a very long time, or ever. The existence of the novel Jane Eyre is very contingent: if Charlotte Brontë had not written it, that precise novel would never have been written by someone else. Agriculture is less contingent because it emerged in multiple locations independently.

We could be in a situation in which we can affect highly contingent outcomes of great importance. Moral progress may not be an unstoppable force. Take one of the most important steps forward in human welfare: the abolition of slavery. Was it contingent on particular historical forces, or was it inevitable? The same could be asked about the social status of women and the LGBTQ+ community. Certain attitude changes are incredibly recent. While MacAskill initially believed that abolition was inevitable, he changed his mind while researching the book.

Slavery is so abhorrent that, before getting to grips with the historical scholarship on the topic, I assumed that abolition must have been inevitable. But now I’m not at all sure. Though it’s impossible to know for certain, it’s entirely plausible to me that, were the tape of history rerun a hundred times with slightly different starting conditions, in a significant proportion of those reruns, there would still be legal slavery in many or most countries in the world, even at today’s level of technological development.

This is a horrifying thought. When we open up to considering counterfactual histories, we can imagine a lot of dystopias. For example, MacAskill asks the reader to imagine a world in which Nazism grew in popularity. Perhaps coercive eugenics would be widespread. It is worth noting, though, that these terrible practices might not seem as terrible to us if we lived in the cultures of that counterfactual world. There are plenty of current practices that we will likely view as abhorrent in retrospect.

If we want the ability to make moral progress and judge our mistakes as abhorrent, it is imperative that we avoid “value lock-in.” We want to avoid the normalization of attitudes that are detrimental to the existence of our civilization and its long-term welfare. The values that we establish today could persist for thousands of years. Consider some important practices and beliefs today that have long histories.

The Babylonian Talmud, compiled over a millennium ago, states that “the embryo is considered to be mere water until the fortieth day”—and today Jews tend to have much more liberal attitudes towards stem cell research than Catholics, who object to this use of embryos because they believe life begins at conception. Similarly, centuries-old dietary restrictions are still widely followed, as evidenced by India’s unusually high rate of vegetarianism, a $20 billion kosher food market, and many Muslims’ abstinence from alcohol.

I believe liberal attitudes toward discarding embryos may be extremely important in the twenty-first century due to the potential benefits of practices like preimplantation genetic testing for polygenic disorders (PGT-P). The importance of this belief for PGT-P would have been difficult to explain to early Talmudic scholars. Similarly, the long-term importance of certain present-day values and practices may be difficult for us to appreciate now. Speculating on complex issues in the future is often going to sound like science fiction. MacAskill prefaces his discussion of these topics with a warning.

This sounds extreme, and as a warning, this chapter will discuss some ideas that will seem weird or sci-fi. But technology is changing rapidly, and technological advances could radically alter the dynamic of moral change that we are used to. When taking the interests of future generations seriously, we simply cannot dismiss major technological advances out of hand. Consider how someone in 1600 would react to the idea that, within two dozen generations, we would be able to make light and fire with the flick of a switch, and would do so dozens of times a day, without a second thought. Or that we could see anyone, anywhere in the world, immediately, in real time, on a device we carried in our pocket. Or that we could fly in the skies, or walk on a celestial body. We simply know that, given continued technological progress, there will be major change over the coming centuries.

The most notable example of a seeming science fiction concern is artificial general intelligence (AGI). The idea is that AGI could potentially be misaligned with human values. Rather than taking care to consider human welfare, an AGI could ruthlessly try to achieve the goal that prompted its creation. This AGI could potentially be more intelligent than anyone living and engage in social manipulation to achieve its aims.

Other concerns include deadly pathogens escaping from laboratories. In this section, MacAskill provides an alarming number of examples in which deadly viruses escaped from research laboratories. In one of the examples, a leak actually occurred twice in the same laboratory. This risk area has received some mainstream attention due to the recent COVID-19 pandemic and the possibility that the virus leaked from a research facility in Wuhan.

Another concern is the use of nuclear weapons, a risk that was perhaps more salient during the Cold War. However, the recent conflict between Ukraine and Russia has brought the possibility of nuclear weapons use back into view. These weapons have the capacity to kill a large number of people, but they are unlikely to kill every single person on the planet. If humans survive a nuclear war, it is likely they could rebuild, provided there is still enough technology and human capital. Contrary to what some might think, Hiroshima and Nagasaki are not desolate wastelands today.

Climate change also poses a threat to human welfare. While some estimates may place future temperatures within a range that is not particularly deleterious to human welfare, some low-probability outcomes at the more extreme end are likely very bad. Since climate change is a collective action problem, it may be difficult to coordinate to reduce emissions. We may need scientific and technological innovation to get widespread adoption of cleaner energy.

Even if not all human beings are killed, the population could be so drastically reduced that civilization cannot return to similar material and social conditions. In this case, it would be difficult to rebuild what was lost. One policy MacAskill believes may be a good idea is keeping surface-level coal reserves to potentially jump-start a second industrial revolution. Even if we are able to return to our pre-collapse levels of economic productivity, we want to avoid stagnating for long periods of time.

Overall, we don’t know just how long stagnation would last. It’s possible that stagnation would be short, lasting only a century or two, but it’s also possible that it would be very long. Perhaps a stagnant future is characterised by recurrent global catastrophes that repeatedly inhibit escape from stagnation; perhaps cultural norms that are inconducive to progress become globally prevalent and are very persistent; perhaps we end up exhausting all recoverable fossil fuels in a stagnant future and the resulting extreme climate change further impedes growth. If some of these come to pass, then stagnation could potentially last for tens of thousands of years.

As discussed earlier, a crucial premise when considering the importance of ensuring survival and changing trajectory is that future people actually matter. If someone were to reject this idea, these arguments and concerns would carry little weight. While it was already shown that future people matter in some way, it is highly relevant how we evaluate populations. MacAskill gives what he describes as the first public introduction to population ethics, a discipline pioneered by the influential philosopher Derek Parfit. First, it must be noted that we cannot be neutral to the creation of happy people. Although this intuition is appealing, it leads to absurd conclusions.

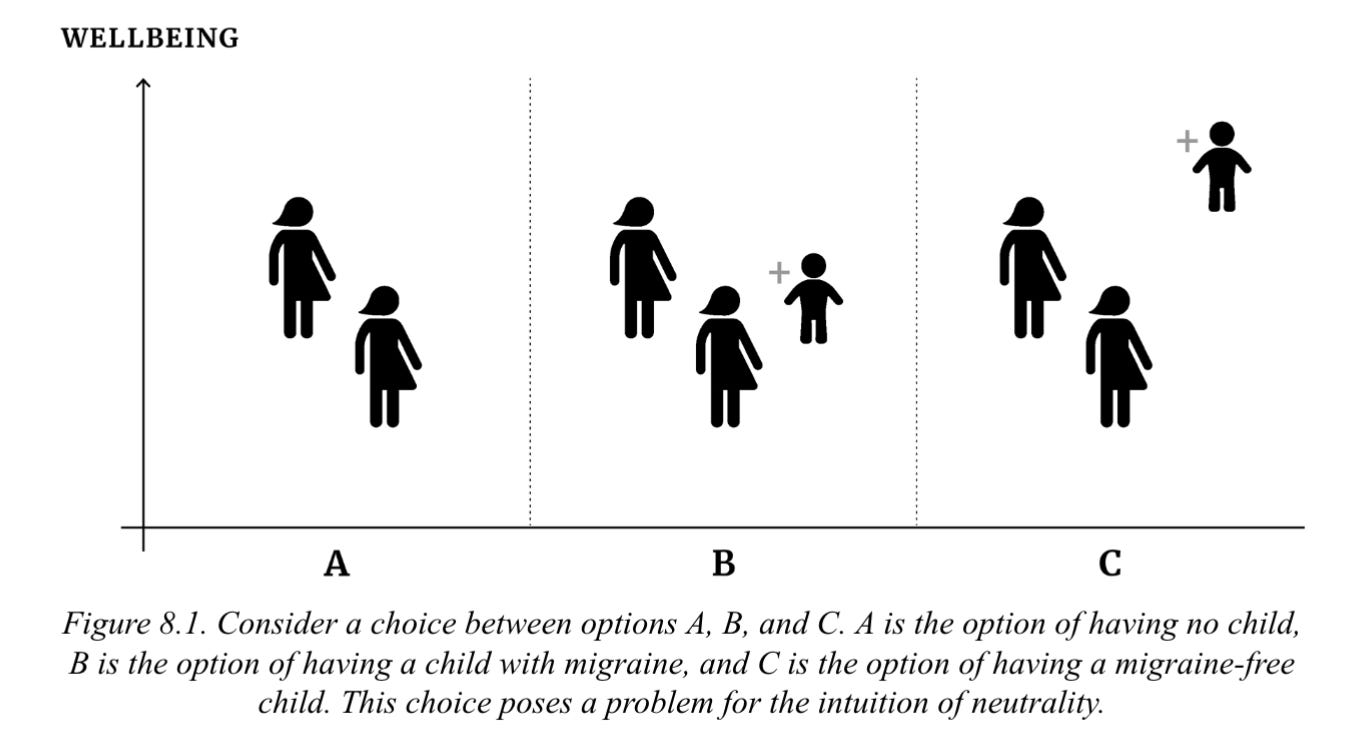

Suppose that a couple are deciding whether or not to have a child. Because of a vitamin deficiency that the mother is currently suffering from, the child they conceive will certainly suffer from migraine: every few months, for their entire life, they will suffer a debilitating headache and have fatigue and brain fog for several days afterwards. But other than this, the child will live a good and full life. According to the intuition of neutrality, it is a neutral matter whether or not these parents have this child: the world is equally good either way.

Now suppose that the parents also have the option of having the child a few months later. At that later point, the mother will no longer suffer from the vitamin deficiency, and the child they conceive will not suffer from migraine as a result. Let’s call the option of having no child “No Child”; “Migraine” is the option of having a child with migraines; and “Migraine-Free” is the option of having a child without migraine (Figure 8.1).

It seems obvious that, as long as there are no other considerations in play, if the parents have the choice, they should choose to have a child that is migraine-free over a child with migraine. That is, Migraine-Free is better than Migraine. But if so, then the intuition of neutrality must be wrong: having a child cannot be a neutral matter.
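The structure of this argument can be checked mechanically. The sketch below is a simplification and assumes that "equally good" can be modeled as numeric equality between the values of outcomes. It searches a small grid of candidate values for any assignment that satisfies the neutrality intuition for both children while also ranking Migraine-Free above Migraine. The function name and the value grid are hypothetical.

```python
from itertools import product


def satisfies_all(no_child: int, migraine: int, migraine_free: int) -> bool:
    """True if an assignment of values respects every judgment at once."""
    # Neutrality: having either child is exactly as good as having no child.
    neutrality = (migraine == no_child) and (migraine_free == no_child)
    # The common-sense ranking: Migraine-Free is better than Migraine.
    migraine_free_better = migraine_free > migraine
    return neutrality and migraine_free_better


candidates = range(-5, 6)  # arbitrary small grid of possible values
solutions = [v for v in product(candidates, repeat=3) if satisfies_all(*v)]

print("consistent assignments found:", len(solutions))
```

No assignment passes, because neutrality forces Migraine and Migraine-Free to have the same value as No Child, and therefore the same value as each other. One of the judgments has to go, and the argument above gives up neutrality.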

I argue against the neutrality intuition in my article “Population Ethics Meets Genetic Enhancement.” I chose a very similar example from Savulescu (2001). MacAskill’s example appears rather contrived, but the coming adoption of genetic enhancement technology will give rise to all sorts of real-world ethical dilemmas such as this. We cannot be neutral about the creation of happy people.

Another intuition that is seemingly plausible is that we ought to maximize the average welfare level. Under this view, creating people whose lives are above average is morally good, and creating people below average is morally bad. Once again this leads to even more counter-intuitive conclusions:

First, if the world consisted of a million people whose lives were filled with excruciating suffering, one could make the world better by adding another million people whose lives were also filled with excruciating suffering, as long as the suffering of the new people was ever-so-slightly less bad than the suffering of the original people. (This is a thought experiment that Parfit presented and referred to as “Hell Three.”) If the original one million people have −100 wellbeing, then in the average view, adding a further million people at wellbeing level −99.9 is a good thing because it brings up the average. But this is absurd.
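The numbers in this thought experiment are easy to verify. The short Python sketch below uses the figures from the quoted passage (one million people at −100, another million at −99.9) and compares what happens to the average and to the total. The helper function is a hypothetical name introduced for illustration.

```python
def average_and_total(groups):
    """groups is a list of (number_of_people, wellbeing_per_person) pairs."""
    people = sum(n for n, _ in groups)
    total = sum(n * w for n, w in groups)
    return total / people, total


before = [(1_000_000, -100.0)]
after = before + [(1_000_000, -99.9)]

avg_before, total_before = average_and_total(before)
avg_after, total_after = average_and_total(after)

print(f"average: {avg_before:.2f} -> {avg_after:.2f}")
print(f"total:   {total_before:,.0f} -> {total_after:,.0f}")
print("average view calls this an improvement:", avg_after > avg_before)
```

The average rises slightly while the total amount of suffering nearly doubles, which is the feature of the average view that the passage calls absurd.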

Population ethics is a particularly difficult area. Whenever you believe that you have come up with an intuitive solution to avoid unwanted conclusions, someone can find a counter-example with something that is very unwanted. I would argue that the Total View is the most coherent and acceptable viewpoint, even though it results in what is called the Repugnant Conclusion—“For any possible population of at least ten billion people, all with a very high quality of life, there must be some much larger imaginable population whose existence, if other things are equal, would be better even though its members have lives that are barely worth living” (Parfit, 1984).
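To see why the Total View produces the Repugnant Conclusion, here is a minimal Python sketch. The wellbeing scores (100 for a very high quality of life, 1 for a life barely worth living) are assumptions made for illustration; only the ten billion figure comes from Parfit’s formulation.

```python
def total_wellbeing(population: int, wellbeing_per_person: float) -> float:
    """Total View: the value of a world is the sum of everyone's wellbeing."""
    return population * wellbeing_per_person


HIGH_QUALITY = 100.0        # assumed score for a very high quality of life
BARELY_WORTH_LIVING = 1.0   # assumed score for a life barely worth living

world_a = total_wellbeing(10_000_000_000, HIGH_QUALITY)

# Smallest population of barely-worth-living lives whose total exceeds world A.
needed = int(world_a / BARELY_WORTH_LIVING) + 1
world_z = total_wellbeing(needed, BARELY_WORTH_LIVING)

print(f"world A total: {world_a:,.0f}")
print(f"world Z needs {needed:,} people, total: {world_z:,.0f}")
print("Total View ranks Z above A:", world_z > world_a)
```

However low the positive wellbeing level is set, some sufficiently large population will have a greater total, so anyone who accepts the Total View has to accept this ranking.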

Some reviewers focused on this part of the book particularly. For example, Scott Alexander disagreed with MacAskill’s views on population ethics in his article “Book Review: What We Owe The Future.” Alexander initially took what I viewed as a dismissive attitude toward discussing these sorts of issues. He did not want to embrace the Repugnant Conclusion and came up with different possible solutions. I believe that I demonstrated why his different population ethics are incorrect and the Repugnant Conclusion should be accepted in my response articles “In Favor of Underpopulation Worries,” “Scott Alexander, Population Ethics, and Playing the Philosophy Game,” and “Population Ethics Meets Genetic Enhancement.”

In addition to population ethics, it is of critical importance to MacAskill’s theory that we expect the future to actually be better given our efforts. We would not want to produce people who will suffer tremendously. MacAskill uses some positive psychology studies to evaluate the amount of time people spend being happy, which was rather interesting. He ends up endorsing the view that the bad can be worse than the good can be good. However, he believes there will be more good than bad in the future. He believes the expected value for the future is positive and that “[w]e have grounds for hope.”

Overall, I think that the book is a good introduction to the ideas, and it is broadly appealing enough that you could suggest it to a person totally unfamiliar with effective altruism. Surely, MacAskill recognized that choices made when writing this book could be incredibly important from a long-term perspective and was careful about what he chose to include. I would say that he made good choices. I hope humanity listens and makes good choices as well.

I totally forgot I hadn't finished reading this. One fundamental issue that strikes me is the inappropriate metaphor of humanity as teenager.

Teenagers do stupid stuff because they don't know any better, and might make bad trades regarding current good against future good. Ok, fine, that makes sense.

The trouble starts in that teenagers have the example of adults to tell them what is a good idea vs a bad idea in terms of trade offs. Humanity doesn't have another species to listen to about "Oh, yea, you don't want to be doing that! I did that and really regretted it." We're it. We don't know what the good outcomes are, or what the bad outcomes are, or really how to steer from one to the other, and no one can offer advice because we don't have another species' experiences to work with. All we have is our own history and our own intuitions about the future. In other words, humanity is an adult, trying to figure things out as we go along.

Which leads to the second problem, one very close to human experience: adults treating other adults as teenagers (minors) never goes well. MacAskill puts himself in the position of advisor to the human species, the species he views as a teenager. MacAskill, in other words, puts himself in the position of the older, successful non-human species offering advice to the screw up humans.

It takes a certain amount of hubris for an adult to tell another adult how to run their lives as though they were minors. It perhaps takes a lot more to tell an adult species how to run its life as though you were a member of a species that is also an adult.

I am assuming, here, that MacAskill is not some sort of Eldar or something. Maybe I am wrong.

Great article!

I’m not sure about the concept of “value lock in.” We don’t have information on the best values for future centuries nor are we in a position to make reasonable predictions.

It seems more important to maintain institutions and values that allow for dialogue and reassessment, like protecting free speech and promoting authenticity, rather than establish eternal values.