Contra Dynomight on Ethics

A response to Dynomight's response to “Just Say No to Utilitarianism”

I. Dynomight on Ethics

Recently, Arjun Panickssery wrote an article entitled “Just Say No to Utilitarianism” in which he argues from an ethical intuitionist perspective that both libertarian natural rights theory and utilitarianism are incorrect. I agree with him. In fact, I would argue in much the same way as Panickssery does. It’s unsurprising to see that he was influenced by Bryan Caplan and Michael Huemer, just as I’ve been. Dynomight responds to Panickssery in an article entitled “Why it's bad to kill Grandma.” I will try to summarize, but you can read the article if you want to get the full picture.

Dynomight ponders the metaethical question of the purpose of ethics and concludes that concepts like fairness and justice do not really exist “in the sense that you can’t derive them from the Schrödinger equation or whatever.” He believes that they are “instincts that evolution programmed into us.” Despite believing intuitions are a consequence of evolution, he says that “when [he thinks] about a child suffering, [he doesn’t] care about any of that”; he says, “while I can see intellectually that right and wrong are just adaptations, I really do believe in them.”

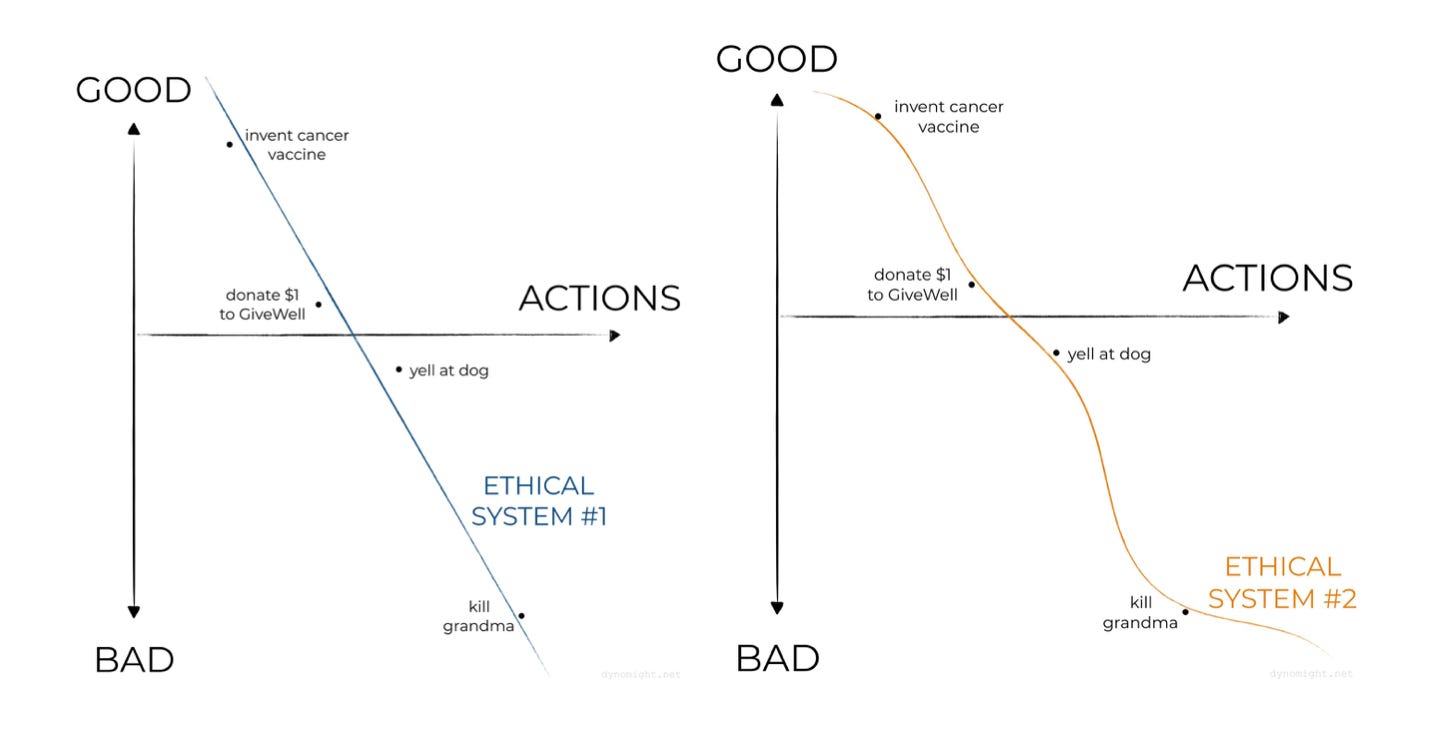

Dynomight believes that we should “look for a formal ethical system” because “it’s practically useful to do so.” He provides a number of graphics to illustrate the point that a simple ethical system—depicted as linear—might not result in outcomes that perfectly match one’s intuitions, but a more complex ethical system—depicted as a wiggly line—“might not seem quite as trustworthy.”

In Dynomight’s view, a useful ethical system “mostly agrees with your moral instincts” and is “simple/parsimonious.” The first justification is that it’s useful for extrapolation because it is “sensible to rely on a simple system that fits with the things we’re sure about.” The second justification is that “it’s useful for conflict resolution” because it’s difficult to solve an ethical conflict by appealing to irreducible moral instincts. The third justification is that it’s “useful for future-proofing.”

II. Parrhesia on Ethics

I believe in Michael Huemer’s principle of Phenomenal Conservatism: “If it seems to S that p, then, in the absence of defeaters, S thereby has at least some degree of justification for believing that p” (Huemer, 2007). In other words, if we don’t have a good reason to doubt our intuitions, then they provide us with at least some evidence. Rejecting non-inferential knowledge leads to some absurdities (Parrhesia, 2022).

We can extend the idea to the area of morality and accept ethical intuitions as a legitimate source of knowledge. Ethical intuitionism “holds that moral properties are objective and irreducible” and “at least some moral truths are known intuitively” (Huemer, 2005, p. 6). For example, if it seems like torturing babies is wrong, that’s at least some reason to think it’s wrong. Even the consequentialist Scott Alexander agrees that “moral theories must end up grounded in our moral intuitions for them to work” (Alexander, 2011).

All our abilities are a consequence of evolution. The fact that evolution gave us intuition and perception to apprehend the world around us is reason to doubt that our intuition and perception are always perfect, but it is insufficient to reject all non-inferential knowledge outright. Similarly, the fact that we only have moral intuitions on account of evolution is a reason to doubt our intuitions are always perfect, but an insufficient reason to reject all non-inferential ethical knowledge outright.

Either intuitions are probative, or they are not. If they are, you should accept moderate deontology, and, if they are not, you should be a moral skeptic (see: Huemer’s utilitarianism debate). However, there are some intelligent defenders of the idea that ethical intuitions are probative and that we should nonetheless accept utilitarianism, such as Bentham's Newsletter and Scott Alexander.

If Dynomight believes that ethical intuitions—such as the belief that child suffering is wrong—are merely evolutionarily adaptive instincts, he must take the stance that intuitions are not probative—they do not afford us proof or evidence of any kind. If intuitions do not afford us evidence, they are either useless or misleading.

If our moral instincts are either useless or misleading, we should absolutely not incorporate them into our ethical system. Analogously, if we were researchers trying to figure out whether an economic policy increases GDP and we learned that literally all of our data was randomly generated, we should not use the data at all. Theories must be built using true evidence. And so, I do not believe that “an ethical system is useful when it mostly agrees with your moral instincts” if moral instincts are “just adaptations.”

Dynomight seeks to formulate an ethical system that is “practically useful.” The essential question is: practically useful for what? If we wish to maximize goodness, we need some conception of goodness. If we reject intuition’s ability to provide us with a sense of what is good, then we must have some system for determining what is good. But Dynomight says that “Fairness and justice don’t ‘really’ exist, in the sense that you can’t derive them from the Schrödinger equation or whatever.” It would appear that Dynomight may reject the idea of objective moral truths.

If there are no objective moral truths, moral statements are either incoherent or false. There is nothing to use to build a moral system. You can’t build a house with no materials. You can’t assemble a puzzle without any pieces. There is no there there. To drive home the point: If I formulate a theory that is literally just “do the opposite of whatever Dynomight prescribes,” it would be just as true as his theory, namely 100% false.

If there are no objective moral truths, even if we have reason to value “extrapolation” and “conflict resolution,” all extrapolations and resolutions would be incorrect. And if there are no objective moral truths, I don’t think we have any reason to value future-proofing. I also believe that Holden Karnofsky’s Future-Proof Ethics suffers from the same issue of lacking an objective foundation (Parrhesia, 2022).

Karnofsky uses intuition to form the belief that we should reject intuitive reasoning. His argument is circular. If you want to use this approach to create an ethical system, you should first formulate metaethical criteria and only then use them to formulate ethical prescriptions and beliefs. You cannot use ethical beliefs as evidence of the validity of a metaethical criterion because the criterion is supposed to precede ethics and serve as a justification for the ethical beliefs.

I will illustrate the issue with Dynomight’s example. Why should we want to future-proof? Take an example: Spartans had bad ethics. How do we know Spartans had bad ethics? Their ethical system was wiggly. How do we know a wiggly ethical system is bad? Because we want a parsimonious ethical system. Why do we want a parsimonious ethical system? Because we want a system that is useful for extrapolation, conflict resolution, and future-proofing. How do we know we want a future-proof ethical system? Take an example: Spartans had bad ethics. And so on. You see the issue. Maybe you could get around it by saying that Spartan ethics is bad because their killing is clearly bad, but then you are accepting ethical intuitions.

To further illustrate the point: Imagine that I want to prove that I am good at math. I know I am good at math because my friend Mark verified that my math homework was well done. How do I know that Mark is capable of verifying that my math homework is well done? Well, I verified Mark’s math homework. How do I know my own opinion on math is worthwhile? Well, Mark verified that I’m good at math. How do I know that I’m good at math? Mark verified it!

If you do not accept an objective foundation of knowledge, you will have an infinite regress or circularity. You must accept non-inferential knowledge to avoid this. In ethics, this means that intuitions provide us with evidence of moral facts. If you take nothing else from this section, remember this: Intuitions are a consequence of adaptations, but if intuitions are “just adaptations,” they are useless or misleading. If they are useless or misleading, you do not want to form an ethical system that “mostly agrees with your moral instincts.” Do not use bad data.

III. Dynomight on Not Killing Grandma

Grandma: Grandma is a kindly soul who has saved up tens of thousands of dollars in cash over the years. One fine day you see her stashing it away under her mattress, and come to think that with just a little nudge you could cause her to fall and most probably die. You could then take her money, which others don’t know about, and redistribute it to those more worthy, saving many lives in the process. No one will ever know. Left to her own devices, Grandma would probably live a few more years, and her money would be discovered by her unworthy heirs who would blow it on fancy cars and vacations. Liberated from primitive deontic impulses by a recent college philosophy course, you silently say your goodbyes and prepare to send Grandma into the beyond.

Dynomight believes that the anti-utilitarians are right about many things, including that “you shouldn’t kill grandma,” “few professed utilitarians would kill grandma,” and “if utilitarianism tells you to kill grandma, that’s a big strike against utilitarianism.” However, Dynomight is skeptical that utilitarianism demands that you kill grandma. He notes that the example is constructed in a way to avoid downstream effects, but says, “You could argue about how plausible [these] assumptions are, but I won’t because I believe in not fighting the hypothetical.”

The issue with grandma-murder is that “nobody wants to live in a world where you kill grandma.” If you legalized the taking of organs in a perfect society he calls “Utilitopia,” then citizens might be “a touch nervous about the fact that they or their loved ones could die at any time.” Dynomight says that his argument is “sort of like this”: “PEOPLE LIKE COMMONSENSE MORALITY → COMMONSENSE MORALITY CREATES UTILITY → COMMONSENSE MORALITY IS UTILITARIANISM, Q.E.D.” Dynomight suggests that we should take into account higher-order consequences and that “[p]eople’s horror at killing grandma is a real thing.”

Dynomight believes that “commonsense morality is an OK-ish utilitarianism.” Our moral instincts know about higher-order issues. He believes our “instincts are tuned to maximize our descendants, not to maximize total utility. But we may be a bit lucky.” Dynomight mentions Huemer’s example about the friend on the death bed:

On his death-bed, your best friend (who didn’t make a will) got you to promise that you would make sure his fortune went to his son. You can do this by telling government officials that this was his dying wish. Should you lie and say that his dying wish was for his fortune to go to charity, since this will do more good?

Dynomight argues that “If people routinely break promises, who will trust those promises?” He also gives another example from Michael Huemer:

You have a tasty cookie that will produce harmless pleasure with no other effects. You can give it to either serial killer Ted Bundy, or the saintly Mother Teresa. Bundy enjoys cookies slightly more than Teresa. Should you therefore give it to Bundy?

His response is that “Maybe there’s some benefit to knowing that if you kill lots of people, everyone will stop being nice to you?”

IV. Parrhesia on Dynomight on Not Killing Grandma

Some people do actually want to “live in a world where you kill grandma,” most notably the people whose lives are saved on account of the money being contributed to a charity. It’s stipulated that the money ends up “saving many lives in the process.” While “horror at killing grandma is a real thing,” it’s not very plausible that it outweighs the good produced from saving many people’s lives. The horror of murder is very serious, but surely it can’t produce more disutility than the utility gained by saving several lives. If it could, then by the same reasoning maybe we should not flip the trolley switch because it would be so emotionally difficult for the flipper to deal with it.

If you are a utilitarian and you think not killing grandma is the morally correct action, consider this scenario: you work at an effective charity and have full access to its account, and nobody would know if you took the money. Your grandmother recently died, but a new technology could resurrect her if you act quickly enough. You can’t afford to pay for it yourself, so you take $45,000 from the charity’s account. Your grandmother is resurrected and gets to live a few more years, but it comes at the cost of 10 human lives. Obviously, you shouldn’t do this even if you felt more emotional anguish at your grandmother dying in this scenario than in the killing grandmother scenario. I think that the unwillingness to kill grandma, combined with the willingness to refrain from stealing to save her, is a consequence of the intuition that there is a distinction between omission and commission (Parrhesia, 2021).

Dynomight says that “[t]rying to give people what they want is literally the definition of utilitarianism.” However, the definition doesn’t suggest that we should give some people what they want if it comes at a loss to net human welfare. It seems hard to imagine killing grandma and donating being less welfare-maximizing than not. That is unless you modify the scenario to be either legally enforced or societally sanctioned, but those are totally different scenarios.

The grandma situation is constructed in such a way that you get away with it and it doesn’t contribute to a societal norm of killing grandma. The organ harvesting example is also meant to be a covert activity and not something that is universal and legally enforced. If you legalize the practice, as Dynomight discusses, of course, you are going to get higher-order effects. The goal of many of these thought experiments is to isolate intuition from higher-order effects to analyze it closely. To talk about a scenario in which these practices are legalized is a totally different thought experiment. Stealing bread to feed your family once and legalizing food theft are not equally defensible. You can’t refute the former by refuting the latter.

Dynomight argues that you shouldn’t break the death-bed promise because “If people routinely break promises, who will trust those promises?” But that’s not the scenario. It’s breaking this one promise, this one time, and in these specific circumstances. That’s not even an appropriate way to generalize. Why generalize without the specification of “breaking deathbed promises when many lives could be saved”? Imagine I objected to diverting the trolley by saying that “if people routinely killed innocent people, what kind of society would we live in?” Does pulling the trolley switch ever-so-slightly contribute to the normalization of killing people? Maybe. Is it clearly outweighed by saving four additional human lives? Of course.

Lies only contribute to the overall decline in trustworthiness if the lies are detected in some way. The scenario is constructed in such a way that the lie will not be detected. Regardless, I could reconstruct it to say “and nobody is anywhere around to know what he actually said and you are an exceptionally good liar.”

Generalizing to a whole society or legally enforcing actions is going to result in some wildly different conclusions. Imagine that I tried to do that with what I’m doing right now. It must be immoral to write a Substack article because if everyone were writing a Substack article, then nobody would be feeding their babies, operating nuclear power plants, performing surgeries, watching over hospital patients, and making sure planes don’t crash into each other. Millions would die if everyone were writing a Substack article, and so what I am doing must be immoral. Utilitarians should evaluate actions given present conditions rather than speculating about the long-term effects if everyone engaged in the action.

V. Dynomight on Small Concerns

In response to the objection that a one-time killing doesn’t make a routine practice, he says:

This last objection is similar to the situations that Derek Parfit worries about: Perhaps it is pointless to donate a liter of water to be divided among 1000 thirsty people since no one can sense the difference of 1 mL? I think this is silly. If enough people donated water, there would clearly be an effect. So unless there’s some weird phase change or something, there’s got to be an effect on the margin.

Dynomight concludes his article by saying that you could imagine a world where “1. people didn’t mind the idea that they or loved ones could be sacrificed for the greater good at any time, and 2. people could always correctly calculate when utility would be increased, and 3. no one would ever abuse this power.” He says this doesn’t prove utilitarianism is wrong and provides his own contribution to the “arbitrary contrived thought experiments” game:

Grandma is a kindly soul who after a long and happy life is near the end of her days. One morning, Satan shows up in your bedroom and says, “Hey, just wanted to touch bases to let you know that Grandma is going to fall down the stairs and die today. You’re welcome to go prevent that, but if you do, I’ll cause 10% of Earth’s population to have heart attacks and then writhe in agony for ten millennia. Up to you!”

You know that everything Satan says is true. Is it right to let Grandma fall down the stairs?

VI. Parrhesia On Small Concerns

I think that the water example is disanalogous because we are talking about moral tradeoffs which involve different considerations. Obviously, 1 mL of water being given is better than 0 mL, so this is trivial. What if you had to punch someone in the face for every 1 mL of water? Now, we have a complicated ethical scenario that involves tradeoffs like the other scenarios. It’s hard for me to imagine that avoiding a single lie, which contributes ever-so-slightly to societal distrust, is worth more than even one single human life. In another scenario, wouldn’t you lie to an ax murderer to prevent them from killing an innocent person? I think almost everyone would, even if it contributed to normalizing lying to a very small degree.

Dynomight says that a utilitarian would have to accept killing grandma only in a world where “1. people didn’t mind the idea that they or loved ones could be sacrificed for the greater good at any time, and 2. people could always correctly calculate when utility would be increased, and 3. no one would ever abuse this power. You might need more stipulations, but you get the idea. With enough assumptions, a utilitarian eventually has to accept that it’s right to kill grandma.” Why such an incredibly high standard? The only standard that needs to be met is expected utility maximization, and I believe that’s met by the original scenario. If not, give a number of lives saved by killing grandma which makes it okay. There must be a number. It is a bit odd that a threshold was never established that would make it okay.
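
To make the request for a number concrete, here is a rough sketch under assumptions of my own. The symbols are purely illustrative and appear nowhere in Dynomight's article: let $N$ be the number of lives saved by the donation, $v > 0$ the utility of each life saved, $g$ the utility of grandma's remaining years, and $h$ the total disutility from higher-order effects such as horror and damage to norms.

```latex
% Illustrative only: N, v, g, h are assumed symbols, not taken from either article.
% Killing grandma maximizes expected utility exactly when the gain exceeds the loss:
\[
  N v > g + h
  \quad\Longleftrightarrow\quad
  N > N^{*} = \frac{g + h}{v}.
\]
% For any finite g and h and any positive v, the threshold N^* is finite.
```

On this sketch, a utilitarian who says you should not kill grandma in the original scenario is committed to saying that “many lives” falls below $N^{*}$, which amounts to a claim about how large $h$ is. Stating an estimate of $h$, or of $N^{*}$ directly, would turn the disagreement into one about magnitudes.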

With regard to the final thought experiment, I think it would be okay to not intervene. Although we have a duty to help our family avoid something terrible like dying, this duty can be outweighed by large enough considerations. This is in line with my moral beliefs and doesn’t pose too much of an issue for me.

VII. In Praise of Bentham’s Bulldog

I think that people like the simplicity of utilitarianism, and that the desire for parsimony weighs very strongly. Dynomight even uses it as a desirable metaethical property. I too have this urge, but it doesn’t seem particularly well-founded in my view. Perhaps it is just the case that ethics is not so parsimonious.

Or maybe I’m wrong on this. Bentham’s Bulldog has some articles addressing Huemer’s objections to utilitarianism, but he takes a different approach. Bentham accepts intuitions as a source of moral knowledge, but he believes that utilitarianism is true and that other intuitions are distortions in thinking. He uses thought experiments to further break down intuitions to show why the utilitarian choice is correct. See his article on the organ harvesting example in which he responds to the question of whether it is moral to harvest organs by saying “of course” and providing an in-depth explanation of why he thinks it’s good to take the organs. I am impressed by his bullet-biting ability. If utilitarianism is true, I think this is the right approach.

Appendix

Dynomight responds below:

Hey there, just saw this! I'll just make two comments:

1. I'm definitely in the ethical intuitions camp. (We know that the Spartans killing the Helots was wrong because it's "obviously wrong".) But I don't think we can treat all possible ethical intuitions equally. After all, if we did that, how can we say the Spartans were wrong?

2. Since you accept the final "letting Grandma fall down the stairs" scenario, I think most of our disagreement comes down to how much harm to our norms/precedents killing grandma would actually cause. I really do maintain that it would be a lot, enough to outweigh the benefit in terms of lives saved. But it seems to me that neither of us have particularly strong arguments on either side here.

Good article. I especially like the part where you praise this Bentham's bulldog guy!